How to Improve G Network Settings for Floating Point

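Computational efficiency matters a great deal in deep learning. One key metric for the computational workload of a neural network is the number of Giga Floating-Point Operations (GFLOPs), that is, billions of floating-point operations needed for one forward pass. This count should not be confused with GFLOPS (operations per second), which measures the throughput of the hardware rather than the cost of the model. In PyTorch, understanding and optimizing for GFLOPs can significantly improve the performance of your models, especially when dealing with large-scale datasets and complex networks.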
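As a rough illustration of how such a count is obtained, the sketch below estimates the operations in a single fully connected layer. The function names `linear_layer_flops` and `to_gflops` are hypothetical helpers, not part of PyTorch, and the estimate assumes the common convention that one multiply-add counts as two floating-point operations.

```python
def linear_layer_flops(batch_size, in_features, out_features):
    """Approximate FLOPs for one forward pass of a fully connected layer.

    Assumption: each multiply-add pair counts as 2 operations, so the
    matrix multiply costs about 2 * batch * in * out operations.
    """
    return 2 * batch_size * in_features * out_features


def to_gflops(flops):
    """Convert a raw operation count to billions of operations."""
    return flops / 1e9


# Hypothetical layer sizes, chosen only for illustration.
flops = linear_layer_flops(batch_size=32, in_features=1024, out_features=1024)
print(f"{flops} FLOPs = {to_gflops(flops):.4f} GFLOPs")
```

Summing such per-layer estimates over a whole model gives an approximate GFLOPs figure. Other counting conventions exist, so totals from different tools may differ by a factor of two.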

Optimizing G Network Settings for Floating Point

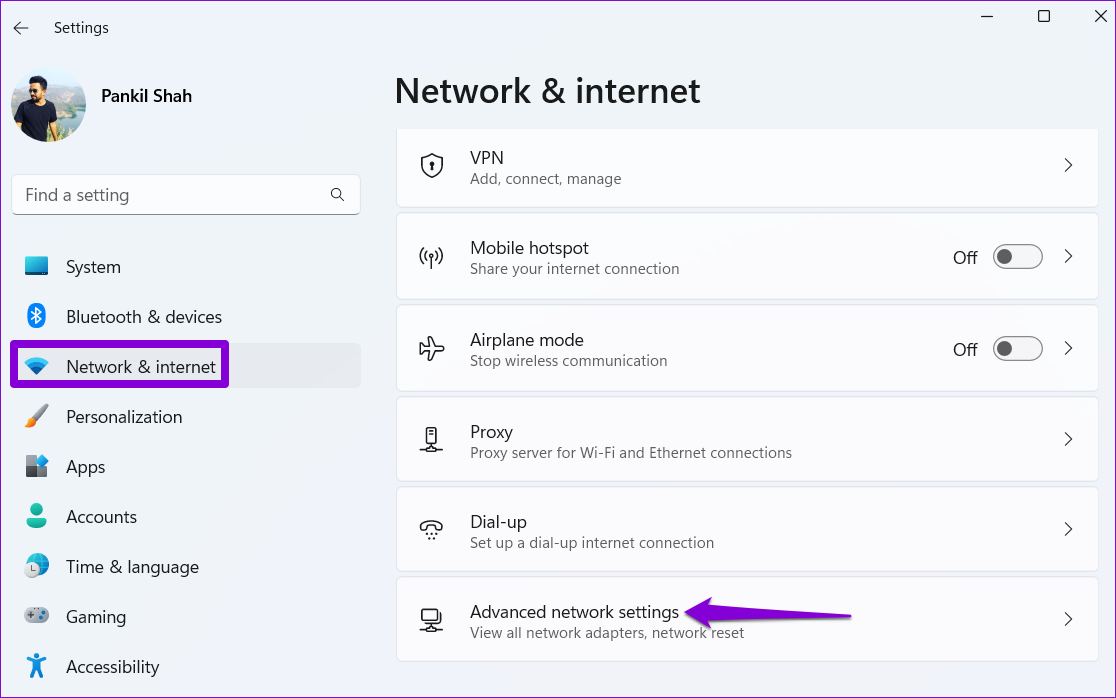

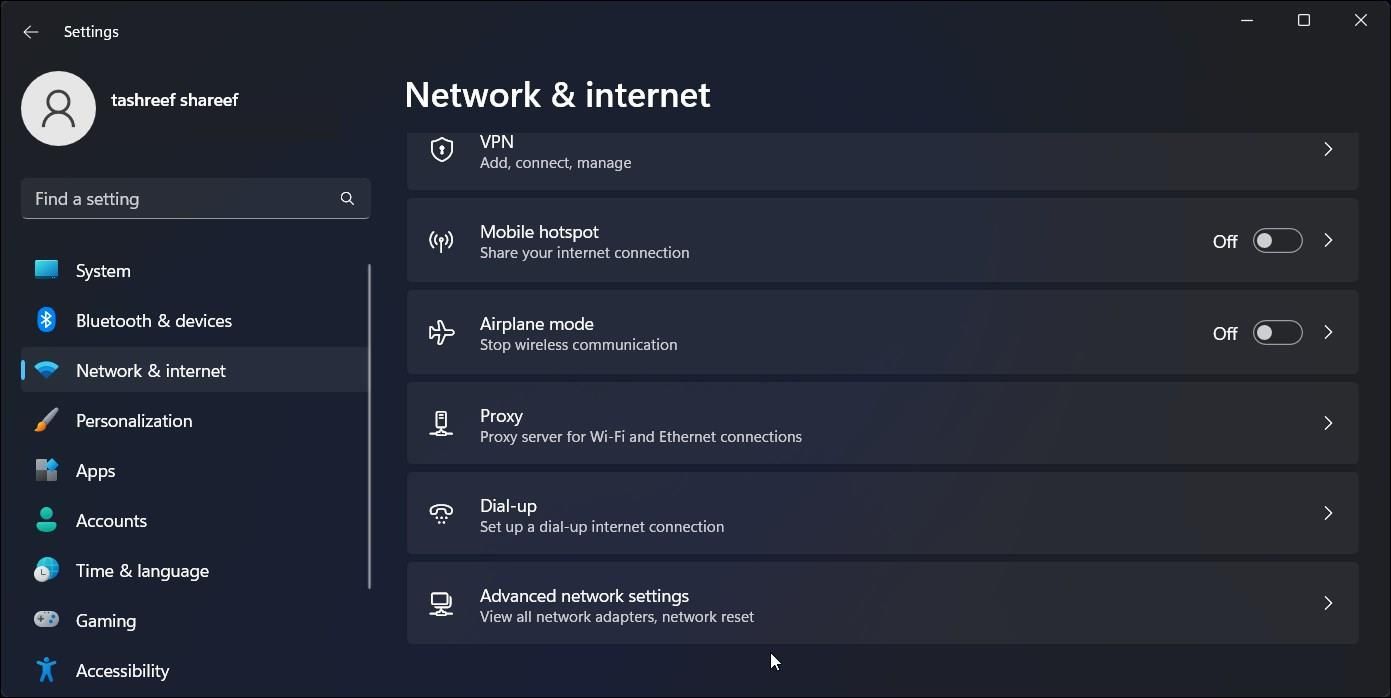

To improve the G network settings for floating point, first make sure the network is configured to handle floating point operations efficiently. Three parameters determine how much floating point work the system can do:

- Number of parallel operations that can be executed simultaneously, which is typically set to the number of available processing units in the system.

- Number of floating point operations that can be executed per clock cycle.

- Size of the floating point data type used for calculations.
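The first two parameters above can be combined into a back-of-the-envelope estimate of peak throughput. The sketch below is only illustrative: `peak_gflops` is a hypothetical helper, the clock frequency is an extra input that the list does not mention, and the per-cycle figure of 16 and the 3.0 GHz clock are made-up values rather than measurements of any real processor.

```python
import os


def peak_gflops(parallel_units, flops_per_cycle, clock_ghz):
    """Theoretical peak throughput in GFLOPS (operations per second).

    Assumption: every unit sustains flops_per_cycle operations on every
    clock cycle, which real workloads rarely achieve.
    """
    return parallel_units * flops_per_cycle * clock_ghz


# os.cpu_count() can return None, so fall back to a single unit.
units = os.cpu_count() or 1

# Illustrative values only: 16 operations per cycle at 3.0 GHz.
print(f"{units} units -> {peak_gflops(units, 16, 3.0):.1f} GFLOPS peak")
```

Dividing a model's operation count by this peak gives a lower bound on its run time. Measured times are usually higher because of memory access and scheduling overhead.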

Choosing the Right Data Type for G Network Settings

The size of the floating point data type used for calculations plays a crucial role in determining the performance of the G network. The most common floating point data types used in neural networks are float16, float32, and float64, which occupy 16, 32, and 64 bits per value respectively. Smaller types use less memory and bandwidth and often run faster on hardware that supports them, but they offer less precision and a narrower range. The right choice depends on the specific requirements of the network.
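The trade-off between size and precision can be seen without any deep learning library. The sketch below uses Python's standard `struct` module, whose `e`, `f`, and `d` format codes correspond to IEEE 754 half, single, and double precision. The helper names `size_in_bytes` and `round_trip` are hypothetical and chosen for this example.

```python
import struct

# struct format codes for IEEE 754 half, single, and double precision.
FORMATS = {"float16": "<e", "float32": "<f", "float64": "<d"}


def size_in_bytes(name):
    """Storage size of one value of the given type."""
    return struct.calcsize(FORMATS[name])


def round_trip(value, name):
    """Store a Python float in the given type and read it back."""
    fmt = FORMATS[name]
    return struct.unpack(fmt, struct.pack(fmt, value))[0]


for name in FORMATS:
    stored = round_trip(0.1, name)
    error = abs(stored - 0.1)
    print(f"{name}: {size_in_bytes(name)} bytes, 0.1 stored as {stored!r}, error {error:.3e}")
```

The printed error shrinks as the type gets wider, while the storage cost doubles at each step. In PyTorch, the corresponding types are `torch.float16`, `torch.float32`, and `torch.float64`.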