Unlocking the Secrets of AI: Understanding AI Explainability Techniques

Artificial Intelligence (AI) has revolutionized numerous aspects of our daily lives, from predictive text on our smartphones to complex decision-making systems in healthcare and finance. Although AI models can be remarkably accurate and efficient, they are often criticized as "black boxes," particularly complex models such as deep neural networks and large language models (LLMs).

AI explainability helps organizations take a responsible approach to AI development. As AI becomes more advanced, it becomes harder for humans to comprehend and retrace how an algorithm arrived at a result. The model's internal computation effectively becomes a "black box" that is extremely difficult to interpret.

What are AI Explainability Techniques?

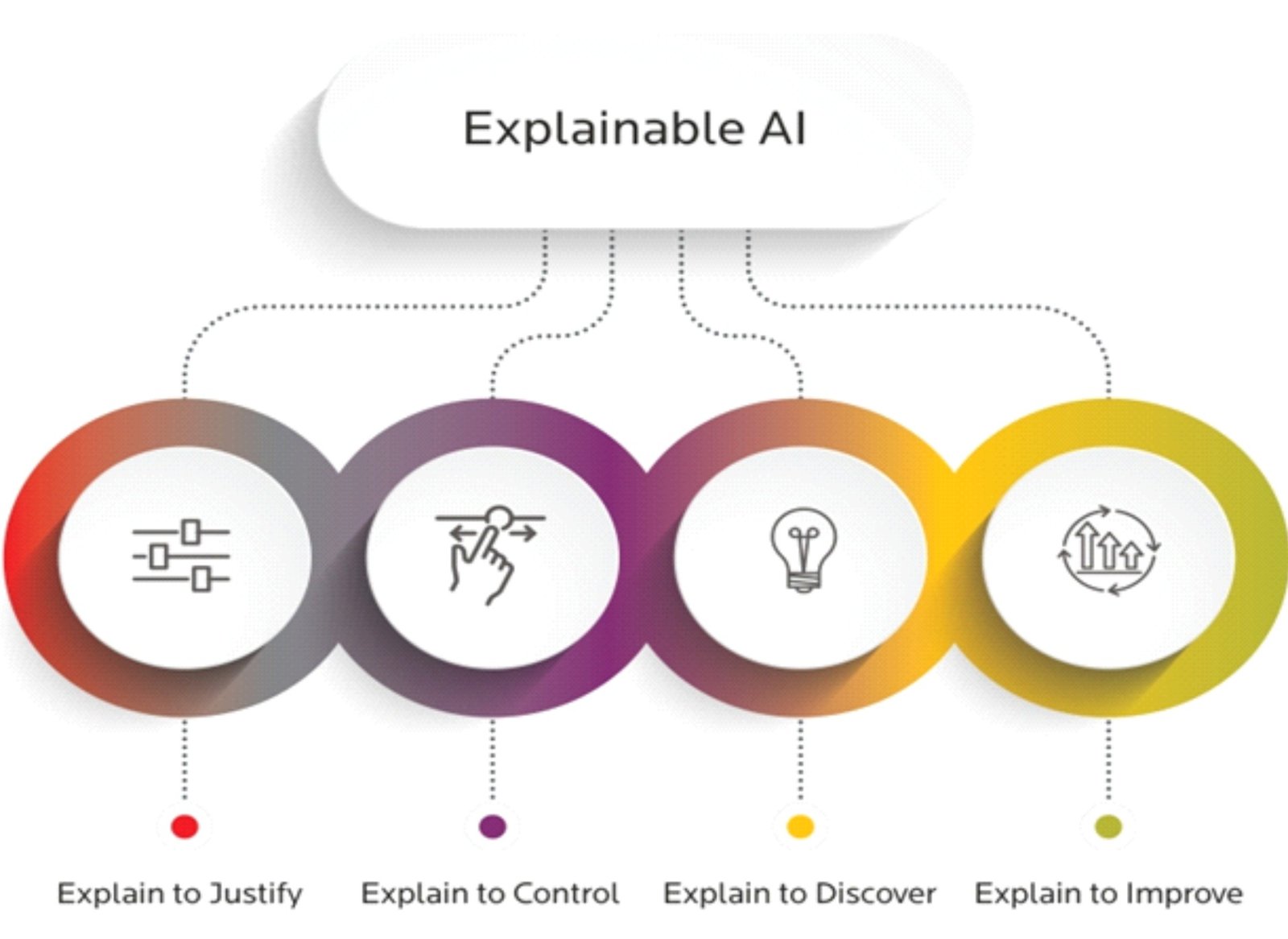

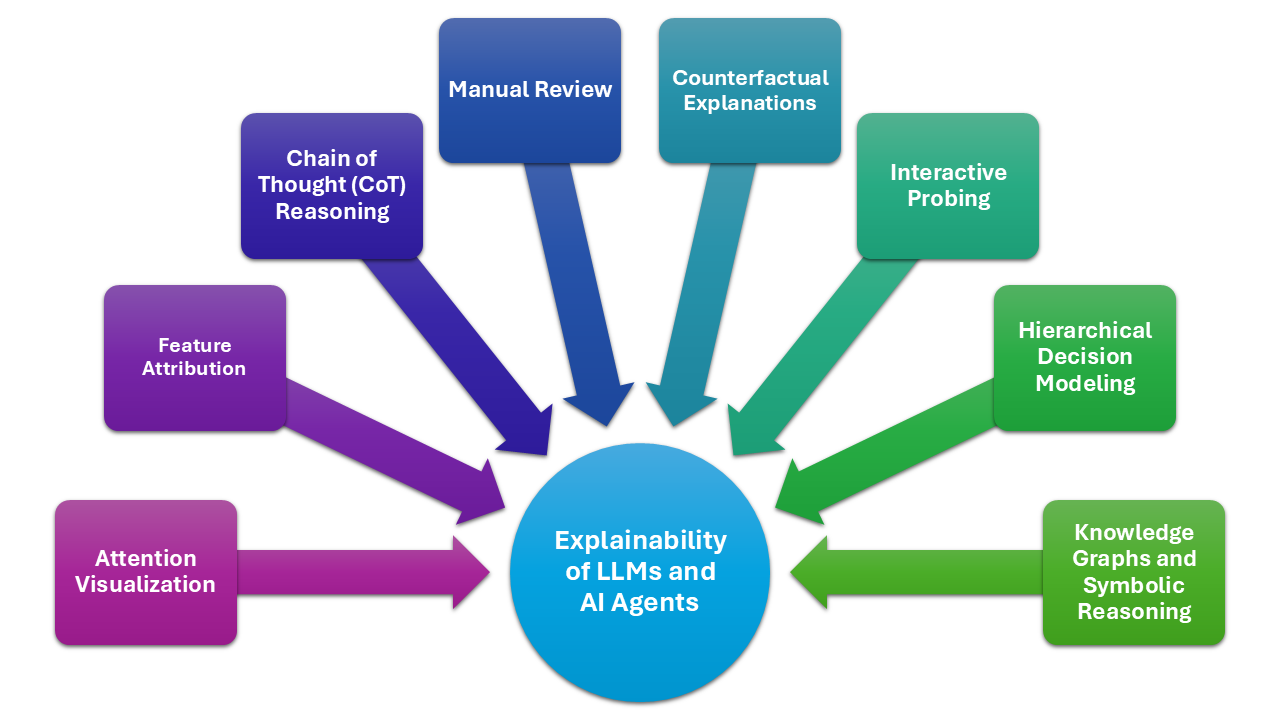

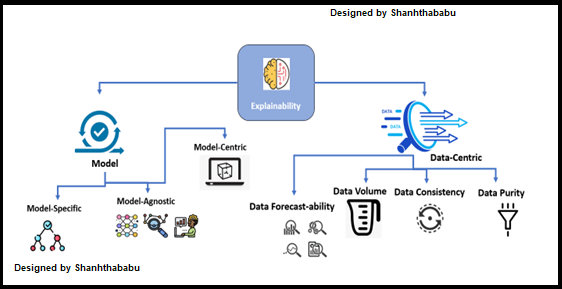

Explainable AI (XAI) refers to a set of processes and methods that make the outputs of machine learning algorithms understandable and trustworthy to human users. It is a key component of the fairness, accountability, and transparency (FAT) machine learning paradigm and is frequently discussed in connection with deep learning.

Explainable AI techniques provide insight into how and why a model reached a specific decision. This helps build trust and confidence in AI systems, especially in high-stakes applications such as finance, healthcare, and transportation. By making model decisions interpretable, organizations can better verify that their models are fair, transparent, and responsible.
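To make "how and why a model made a specific decision" concrete, here is a minimal sketch of one simple, model-agnostic technique: perturbation-based (occlusion) attribution. It treats the model purely as a black box. For a single input, it replaces one feature at a time with a baseline value and records how much the prediction changes. This technique was chosen for illustration and is not necessarily one the article has in mind. The `credit_model` function, its feature names, weights, and the baseline values are all hypothetical.

```python
from typing import Callable, Sequence


def occlusion_attributions(
    model: Callable[[Sequence[float]], float],
    x: Sequence[float],
    baseline: Sequence[float],
) -> list[float]:
    """Estimate how much each feature of x contributed to model(x).

    For each feature i, x[i] is replaced by baseline[i] (all other features
    unchanged). The attribution is the resulting drop in the model's output.
    """
    if len(x) != len(baseline):
        raise ValueError("x and baseline must have the same length")
    full_score = model(x)
    attributions = []
    for i in range(len(x)):
        occluded = list(x)
        occluded[i] = baseline[i]  # "remove" feature i by resetting it to the baseline
        attributions.append(full_score - model(occluded))
    return attributions


# Hypothetical credit-scoring model. The explainer above only calls it,
# so it could just as well be an opaque neural network.
def credit_model(features: Sequence[float]) -> float:
    income, debt, years_employed = features
    return 0.5 * income - 0.8 * debt + 0.3 * years_employed


if __name__ == "__main__":
    applicant = [4.0, 2.0, 5.0]
    average_applicant = [3.0, 1.0, 5.0]  # hypothetical reference point
    names = ["income", "debt", "years_employed"]
    scores = occlusion_attributions(credit_model, applicant, average_applicant)
    for name, score in zip(names, scores):
        print(f"{name}: {score:+.2f}")
```

For a linear model like this one, each attribution equals the feature's weight times its difference from the baseline. In this example, higher income raises the score, higher debt lowers it, and employment length contributes nothing because it matches the baseline. For non-linear models with feature interactions, changing one feature at a time can miss those interactions. Methods such as SHAP average over many feature combinations to address this.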