Unlocking the Power of Optional Foundation Tuning: A Guide to Customizing Your AI Models

Foundation models have become one of the most talked-about ideas in artificial intelligence. These pre-trained models perform well across a wide range of tasks, but their sheer size makes fine-tuning them costly and technically demanding. Optional foundation tuning has emerged as one way to address this problem.

What is Optional Foundation Tuning?

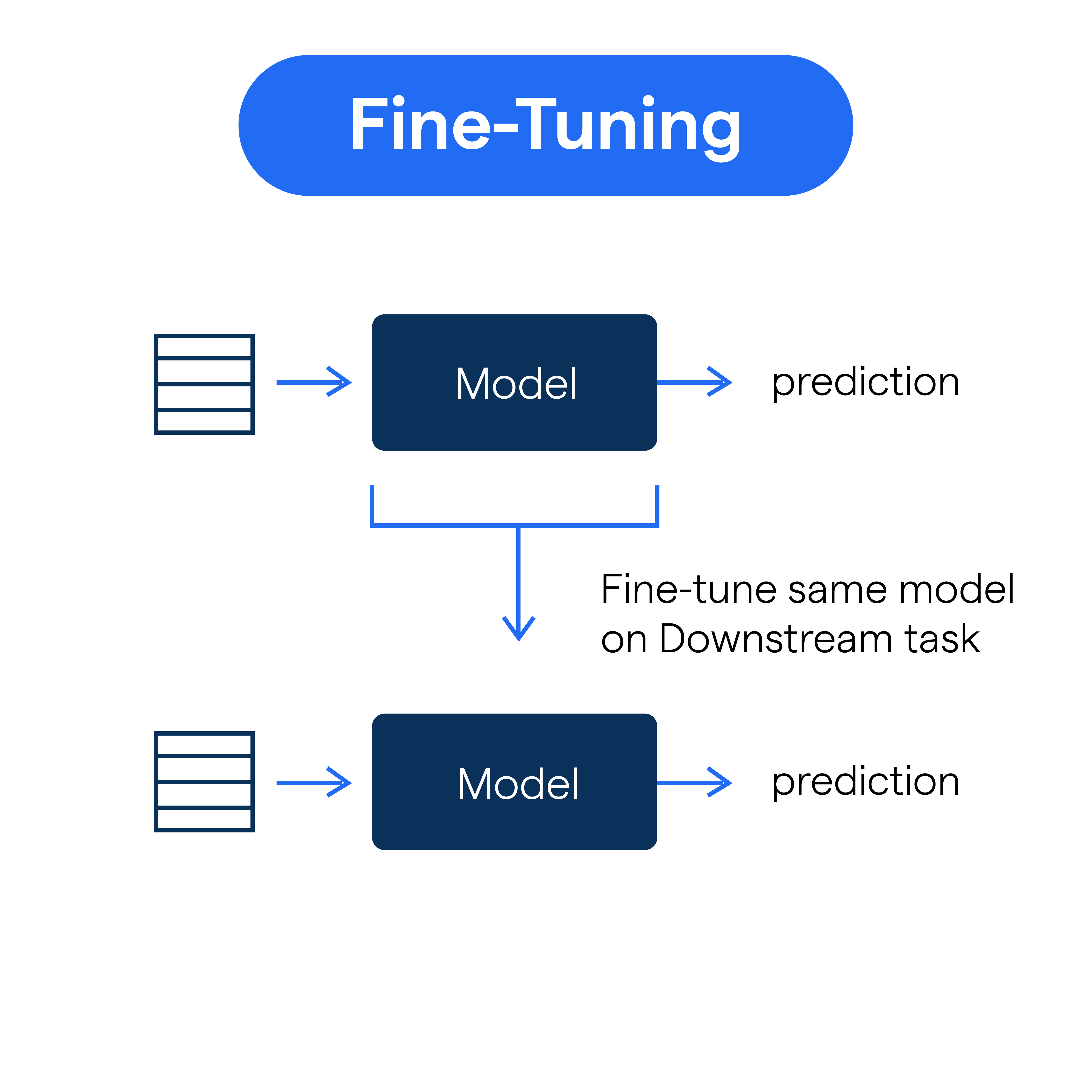

Optional foundation tuning is a way to train and customize a model by supplying custom weights through the optional parameter 'custom_weights_path' in the Foundation Model Fine-Tuning API. It lets developers keep the broad capabilities of a foundation model while adapting it to their own small, task-specific dataset. Fine-tuning continues training the model and updates its weights, which is what adapts it to a specific domain or task.
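To make the idea of an optional parameter concrete, here is a minimal sketch of how a fine-tuning request might be assembled with and without 'custom_weights_path'. It is an illustration only, not the API's actual client: the `FineTuneRequest` class, the `model` and `train_data_path` fields, and all paths are hypothetical, and it assumes the parameter points at a previously saved set of weights.

```python
from dataclasses import dataclass, asdict
from typing import Optional


@dataclass
class FineTuneRequest:
    """Hypothetical request payload. Only custom_weights_path comes from
    the article; every other name here is an assumption for illustration."""
    model: str                                  # name of the base foundation model
    train_data_path: str                        # task-specific training data
    custom_weights_path: Optional[str] = None   # optional: weights to start from

    def to_payload(self) -> dict:
        # Leave out unset optional fields so defaults apply on the other side.
        return {k: v for k, v in asdict(self).items() if v is not None}


# Without custom weights: the request carries only the required fields.
default_run = FineTuneRequest(
    model="example-base-model",
    train_data_path="data/train.jsonl",
)

# With custom weights: the request also names the weights to load (assumed path).
custom_run = FineTuneRequest(
    model="example-base-model",
    train_data_path="data/train.jsonl",
    custom_weights_path="checkpoints/previous-run",
)

print(default_run.to_payload())
print(custom_run.to_payload())
```

Because the field defaults to `None` and is dropped from the payload when unset, existing code that never mentions custom weights keeps working unchanged, which is the practical meaning of "optional" here.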

Benefits of Optional Foundation Tuning

- **Cost-Effectiveness**: Fine-tuning a pre-trained foundation model is usually far cheaper than training a model from scratch, because it needs much less compute and data.

- **Flexibility**: Optional foundation tuning lets developers experiment with different model configurations and starting weights to find what works best for their use case.

- **Improved Performance**: Fine-tuning lets the model specialize in a specific task, which typically improves its performance and efficiency on that task.