AI Ethical Risk Management: Navigating the Complexities of Artificial Intelligence Governance

Artificial Intelligence (AI) has become integral to industries ranging from healthcare to finance. Alongside its benefits, AI carries inherent risks and ethical concerns that call for proactive management. This article examines AI Ethical Risk Management: why it matters, its key considerations, and its role in ensuring the safe and responsible development and deployment of AI systems.

The Evolution of AI Risk Management

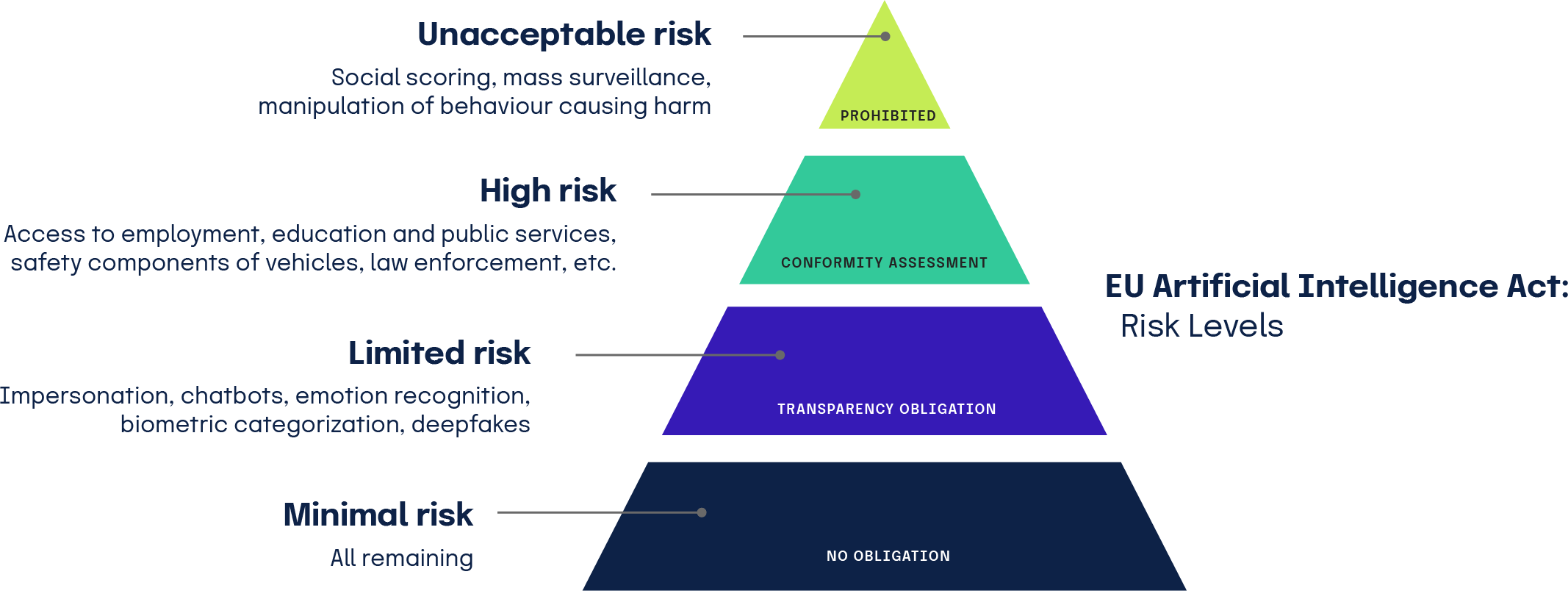

The release of NIST AI 600-1, Artificial Intelligence Risk Management Framework: Generative Artificial Intelligence Profile, in July 2024 marked a significant milestone in AI risk management. The profile supports the identification and mitigation of risks associated with generative AI, underscoring the importance of proactive measures against these unique threats. AI risk management is part of the broader field of AI governance, which emphasizes establishing "guardrails" so that AI tools and systems operate safely and ethically.

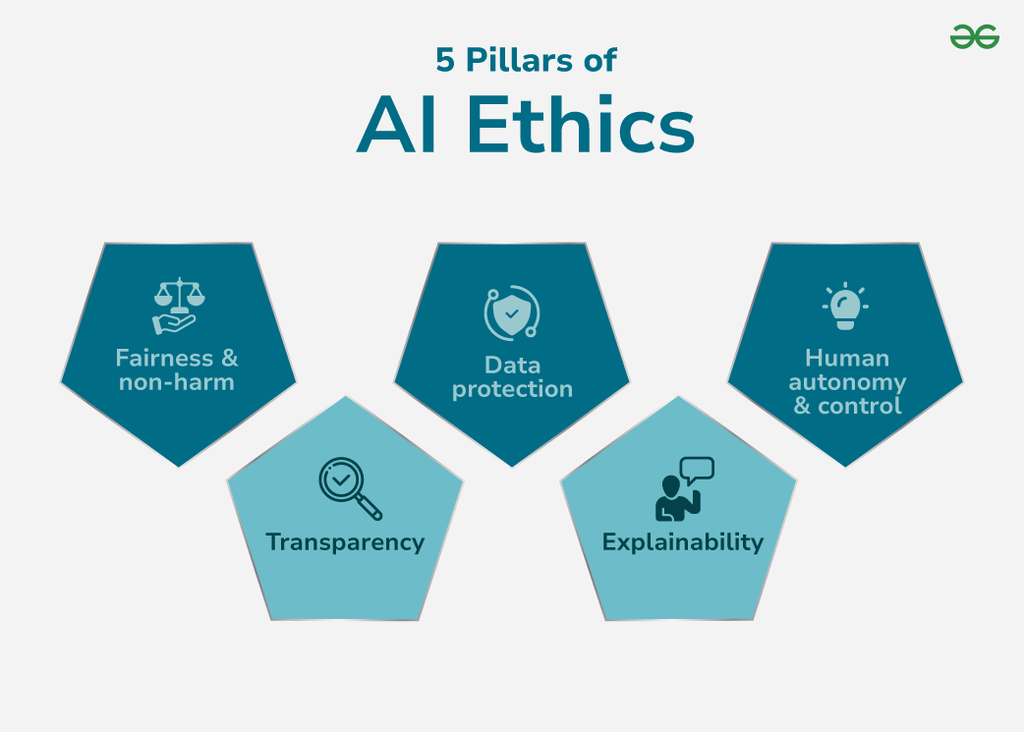

The Concept of Ethical Risk

Ethical risk, a crucial component of AI risk management, appears often in discussions about the responsible development and deployment of AI. The term remains loosely defined, however, which blurs the difference between ethical risk and other types of risk such as social, reputational, or legal risk. One paper offers a clear definition of ethical risk as "risk that would have a direct or indirect impact on the moral values of stakeholders", specifying what distinguishes it from other forms of risk.